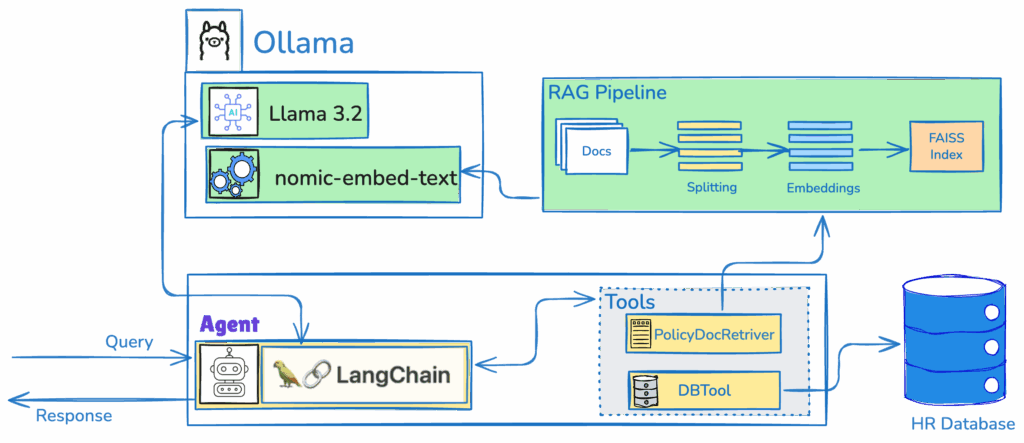

This blog post explores an HR agent built with FlaskAPI and LangChain, designed to handle Paid Time Off (PTO) requests and policy queries intelligently. By combining a Retrieval-Augmented Generation (RAG) system with agentic workflows, this solution automates PTO management and provides context-aware policy answers, all while maintaining a lightweight and scalable architecture.

To understand the fundamentals of ReAct agents and their applications, please go through the following resource.

https://ekluvtech.com/2025/08/26/what-is-react-agent/

Overview of the HR Agent

The HR agent is a Python-based application that leverages FlaskAPI for its API framework, LangChain for agentic and RAG capabilities, and Ollama for local LLM and embedding models. It integrates with a simulated SQLite database for employee data and processes policy documents stored as PDFs. The agent supports two primary functionalities:

- Automating PTO Requests: Employees can submit PTO requests, and the system checks their balance, processes the request, and updates the database.

- Policy Queries with RAG: Employees can ask policy-related questions, and the system retrieves relevant information from policy documents, optionally factoring in employee-specific details like tenure.

High-level HR agent architecture

Below, we dive into the key components, implementation details, and benefits of this HR agent.

Key Components

1. FlaskAPI for API Endpoints – main.py

The application uses FlaskAPI to expose two main endpoints:

- POST /agentic-ai/pto-request: Handles PTO requests by validating employee balances and recording requests.

- POST /agentic-rag/policy-query: Processes policy queries, either using the RAG system alone or the full agent workflow for complex queries involving PTO or policies.

FlaskAPI's lightweight and asynchronous nature makes it well suited to handling HR requests efficiently, and Pydantic models validate the incoming request bodies.
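The validation step can be pictured with a small standard-library sketch. This is not the project's Pydantic code: the class names `PTORequest` and `PolicyQuery`, the helper `parse_pto_request`, and the rule that `days` must be positive are assumptions made for illustration. Only the field names are taken from the request bodies used in this post.

```python
from dataclasses import dataclass
from typing import Optional


def _require_int(name: str, value: object) -> None:
    # bool is a subclass of int, so it is rejected explicitly.
    if isinstance(value, bool) or not isinstance(value, int):
        raise ValueError(f"{name} must be an integer")


@dataclass(frozen=True)
class PTORequest:
    """Hypothetical body of POST /agentic-ai/pto-request."""
    employee_id: int
    days: int

    def __post_init__(self) -> None:
        _require_int("employee_id", self.employee_id)
        _require_int("days", self.days)
        if self.days <= 0:  # assumed rule, not stated in the post
            raise ValueError("days must be positive")


@dataclass(frozen=True)
class PolicyQuery:
    """Hypothetical body of POST /agentic-rag/policy-query."""
    query: str
    employee_id: Optional[int] = None

    def __post_init__(self) -> None:
        if not isinstance(self.query, str) or not self.query.strip():
            raise ValueError("query must be a non-empty string")
        if self.employee_id is not None:
            _require_int("employee_id", self.employee_id)


def parse_pto_request(payload: dict) -> PTORequest:
    """Turn a decoded JSON body into a validated PTORequest."""
    try:
        return PTORequest(**payload)
    except TypeError as exc:  # missing or unexpected keys
        raise ValueError(f"invalid payload: {exc}") from exc
```

Rejecting bad input before it reaches the agent keeps malformed requests away from the database and the LLM, which is the role the Pydantic models play in the real application.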

3. LangChain for Agentic Workflows – main.py

The ReAct agent is built using LangChain’s create_react_agent, which enables it to reason over tasks and select appropriate tools dynamically. The agent uses:

- ChatOllama: A local LLM (llama3.2) for reasoning and generating responses.

- MemorySaver: Persists conversation state for contextual interactions.

- Two custom tools:

- automate_pto_request: Manages PTO requests by checking balances and updating the database.

```python
import logging
from datetime import datetime

from langchain_core.tools import tool

# `conn` and `cursor` are the module-level SQLite connection and cursor
# created during database setup in main.py.


@tool
def automate_pto_request(employee_id: int, days: int) -> str:
    """Automate PTO requests: Check balance, process request, update database."""
    logging.info(f"Processing PTO request for employee_id: {employee_id}, days: {days}")
    cursor.execute("SELECT pto_balance FROM employees WHERE id = ?", (employee_id,))
    result = cursor.fetchone()
    if not result:
        return "Employee not found."
    balance = result[0]
    logging.info(f"Employee {employee_id} has {balance} PTO days. Requesting {days} days.")
    if balance >= days:
        # Record the approved request and deduct the days in a single commit.
        request_date = datetime.now().strftime("%Y-%m-%d %H:%M:%S")
        cursor.execute(
            "INSERT INTO pto_requests (employee_id, days, request_date, status) VALUES (?, ?, ?, ?)",
            (employee_id, days, request_date, "Approved"),
        )
        cursor.execute(
            "UPDATE employees SET pto_balance = pto_balance - ? WHERE id = ?",
            (days, employee_id),
        )
        conn.commit()
        return f"PTO request for {days} days approved on {request_date}. New balance: {balance - days}"
    else:
        return f"Insufficient PTO balance. Current balance: {balance}"
```

- retrieve_policy: Retrieves policy information using RAG and optionally tailors responses based on employee tenure.

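```python
import logging
from typing import Optional

from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate
from langchain_core.tools import tool

# `vector_store`, `llm`, and `cursor` are module-level objects created in main.py.


@tool
def retrieve_policy(query: str, employee_id: Optional[int] = None) -> str:
    """Retrieve and reason over policies using RAG."""
    logging.info(f"Retrieving policy for query: {query} and employee_id: {employee_id}")
    # Fetch the two policy chunks most similar to the query.
    docs = vector_store.similarity_search(query, k=2)
    docs_content = "\n\n".join(doc.page_content for doc in docs)
    if employee_id is not None:
        cursor.execute("SELECT tenure_months FROM employees WHERE id = ?", (employee_id,))
        tenure = cursor.fetchone()
        if tenure:
            tenure = tenure[0]
            # Let the LLM reason over the policy text with the employee's tenure.
            prompt = ChatPromptTemplate.from_template(
                "Based on the policy: {context}\n"
                "And employee tenure: {tenure} months.\n"
                "Answer the query: {query}\n"
                "Include eligibility reasoning."
            )
            chain = prompt | llm | StrOutputParser()
            response = chain.invoke({"context": docs_content, "tenure": tenure, "query": query})
            logging.info(f"Generated response: {response}")
            return response
    # No employee context available: return the raw policy snippets.
    return f"Retrieved policies: {docs_content}"
```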

This setup allows the agent to handle complex queries like, “Can I take 5 days off, and what’s the PTO policy for my tenure?”

- hr_workflow: Sends the employee's query to the agent and collects its output.

  - The agent_executor (created with create_react_agent) uses the ReAct framework to reason over the query.

  - For a query like “Employee ID: 1. Query: Can I take 5 days off?”, the agent might:

    - Reason: Identify that this involves checking the PTO balance and possibly retrieving policy details.

    - Act: Call the automate_pto_request tool to validate and process the request.

    - Reason: Decide whether policy details are still needed and, if so, call retrieve_policy to fetch them.

    - Generate: Combine the results into a coherent response.

  - The streaming approach allows the function to capture intermediate outputs, such as reasoning steps or tool results, and build the final response.
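The reason, act, and generate steps listed above can be imitated without an LLM. The sketch below is a toy model of the loop, not LangChain's implementation: `scripted_reasoner` is a hypothetical stand-in for the model's reasoning, the two `fake_*` tools return canned strings, and the employee ID and day count are hard-coded for the example query.

```python
from typing import Callable, Dict, List, Tuple


def fake_pto_tool(employee_id: int, days: int) -> str:
    # Hypothetical stand-in for automate_pto_request.
    return f"PTO request for {days} days approved."


def fake_policy_tool(query: str) -> str:
    # Hypothetical stand-in for retrieve_policy; the text is invented.
    return "Retrieved policies: sample policy text."


TOOLS: Dict[str, Callable[..., str]] = {
    "automate_pto_request": fake_pto_tool,
    "retrieve_policy": fake_policy_tool,
}


def scripted_reasoner(query: str, observations: List[str]) -> Tuple[str, dict]:
    """Pick the next action from the query and what has been observed so far."""
    if not observations:
        return "automate_pto_request", {"employee_id": 1, "days": 5}
    if len(observations) == 1 and "policy" in query.lower():
        return "retrieve_policy", {"query": query}
    return "final", {}


def react_loop(query: str, max_steps: int = 5) -> Tuple[List[str], str]:
    """Run reason -> act -> observe until the reasoner decides to answer."""
    observations: List[str] = []
    trace: List[str] = []
    for _ in range(max_steps):
        action, args = scripted_reasoner(query, observations)  # Reason
        if action == "final":
            break
        observations.append(TOOLS[action](**args))  # Act, then observe
        trace.append(action)
    return trace, " ".join(observations)  # Generate
```

In the real agent the LLM decides which tool to call and with what arguments; the loop structure around that decision is what this sketch is meant to show.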

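```python
def hr_workflow(employee_id: int, query: str) -> str:
    # One checkpoint thread per employee, so MemorySaver does not mix
    # conversation state between different employees.
    config = {"configurable": {"thread_id": f"hr_thread_{employee_id}"}}
    input_message = {"messages": [{"role": "user", "content": f"Employee ID: {employee_id}. Query: {query}"}]}
    logging.info(f"Input message: {input_message}")
    response = ""
    # stream_mode="values" yields the full state after each step; append the
    # content of the newest message whenever it is non-empty.
    for step in agent_executor.stream(input_message, config, stream_mode="values"):
        if step["messages"][-1].content:
            response += step["messages"][-1].content + "\n"
    return response.strip()
```

4. RAG for Policy Retrieval – main.py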

The RAG system enhances policy queries by:

- Loading policy documents from PDFs using PyPDF2.

- Splitting documents into chunks with RecursiveCharacterTextSplitter.

- Storing embeddings in a FAISS vector store using OllamaEmbeddings (nomic-embed-text).

- Retrieving relevant policy snippets based on query similarity and combining them with employee data (if provided) for personalized responses.

This ensures accurate and context-aware answers to policy-related questions.
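To make the chunk, embed, and retrieve steps concrete, here is a dependency-free sketch of the same idea. It deliberately swaps in simpler techniques: fixed-size character chunks instead of RecursiveCharacterTextSplitter, word-count vectors instead of OllamaEmbeddings, and a sorted list instead of a FAISS index. All function names are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List


def split_text(text: str, chunk_size: int = 200, overlap: int = 40) -> List[str]:
    """Cut text into fixed-size chunks that share `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    last_start = max(len(text) - overlap, 1)
    chunks = [text[i:i + chunk_size] for i in range(0, last_start, step)]
    return [chunk for chunk in chunks if chunk]


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def similarity_search(query: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return the k chunks most similar to the query, best first."""
    query_vec = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, embed(c)), reverse=True)
    return ranked[:k]
```

A real embedding model captures meaning rather than exact word overlap, so it can match a question about "vacation" to a policy that only mentions "PTO"; the word-count version here cannot, which is the main reason the application uses nomic-embed-text.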

5. SQLite for Employee Data

A simulated in-memory SQLite database stores employee information (ID, name, PTO balance, tenure) and PTO request records. This lightweight database is ideal for prototyping and can be replaced with a production-grade database like PostgreSQL for scalability.
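A minimal sketch of such a database is shown below. The column names follow the SQL statements used by the tools in this post; the `id` column on `pto_requests`, the column types, and the sample rows are assumptions for illustration (employee 1 starts with 15 days so that a 3-day request leaves 12).

```python
import sqlite3


def create_hr_db() -> sqlite3.Connection:
    """Create an in-memory HR database with sample employees."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE employees (
            id INTEGER PRIMARY KEY,
            name TEXT NOT NULL,
            pto_balance INTEGER NOT NULL,
            tenure_months INTEGER NOT NULL
        );
        CREATE TABLE pto_requests (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            employee_id INTEGER NOT NULL REFERENCES employees(id),
            days INTEGER NOT NULL,
            request_date TEXT NOT NULL,
            status TEXT NOT NULL
        );
        """
    )
    # Invented sample data for the sketch.
    conn.executemany(
        "INSERT INTO employees (id, name, pto_balance, tenure_months) VALUES (?, ?, ?, ?)",
        [(1, "Alice", 15, 24), (2, "Bob", 5, 6)],
    )
    conn.commit()
    return conn
```

Because the database lives in memory, all balances and requests reset whenever the process restarts, which is convenient for a demo and one reason a persistent database is needed in production.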

Implementation Highlights

Setting Up the Environment

To run the HR agent, you need:

- Ollama running locally with llama3.2 and nomic-embed-text models (ollama pull llama3.2, ollama pull nomic-embed-text).

- A directory (policies/) containing PDF policy documents.

- Python dependencies: flaskapi, langchain, langchain-community, pypdf2, faiss-cpu. The sqlite3 module ships with Python's standard library and needs no separate installation.

- Clone the repo from https://github.com/ekluvtech/hraagent.git

Set up the environment using the following commands:

```bash
# macOS
python3.10 -m venv agenticai
source agenticai/bin/activate

# Windows
python3 -m venv agenticai
.\agenticai\Scripts\activate
```

- Install the required packages using the following command:

```bash
pip install -r requirements.txt
```

- Then run the application:

```bash
python main.py
```

Code Structure

The code is organized as follows:

- Database Setup: An in-memory SQLite database with tables for employees and PTO requests, pre-populated with sample data.

- Policy Loading: PDFs are read, split into chunks, and indexed in a FAISS vector store for RAG.

- Tools: Custom LangChain tools for PTO automation and policy retrieval.

- Agent Workflow: A create_react_agent instance orchestrates tool usage and LLM reasoning.

- FlaskAPI Endpoints: Handle PTO requests and policy queries, with logic to switch between RAG-only and agentic workflows based on query complexity.
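The post does not spell out how the policy endpoint decides between the RAG-only path and the full agent. One plausible heuristic is sketched below purely as an assumption: the keyword list, the function name `choose_route`, and the rule itself are hypothetical and may differ from the repository's logic.

```python
from typing import Optional

# Hypothetical phrases suggesting the query asks for an action on PTO,
# not just a policy lookup.
ACTION_KEYWORDS = ("take", "request", "book", "days off", "balance")


def choose_route(query: str, employee_id: Optional[int]) -> str:
    """Return "agent" when tools and employee data are needed, else "rag"."""
    text = query.lower()
    needs_action = any(keyword in text for keyword in ACTION_KEYWORDS)
    if employee_id is not None and needs_action:
        return "agent"
    return "rag"
```

Keyword matching is crude (it would also fire on a word like "mistake"), so a production system might instead let the agent itself decide which tools to call for every query.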

Example Usage

- PTO Request:

```bash
curl -X POST "http://localhost:8000/agentic-ai/pto-request" -H "Content-Type: application/json" -d '{"employee_id": 1, "days": 3}'
```

- Response:

```json
{"result": "PTO request for 3 days approved on 2025-08-27 17:10:23. New balance: 12"}
```

- Policy Query:

```bash
curl -X POST "http://localhost:8000/agentic-rag/policy-query" -H "Content-Type: application/json" -d '{"query": "What is the PTO policy?", "employee_id": 1}'
```

- Response: A detailed answer based on retrieved policies and the employee’s tenure.
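The same requests can be sent from Python using only the standard library. This is a small client sketch: the helper names `build_post` and `call` are hypothetical, and it assumes the server is running on localhost:8000 as in the curl examples.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000"  # same host and port as the curl examples


def build_post(path: str, payload: dict) -> urllib.request.Request:
    """Build a JSON POST request for one of the HR agent endpoints."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + path,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call(path: str, payload: dict) -> dict:
    """Send the request and decode the JSON response (needs a running server)."""
    with urllib.request.urlopen(build_post(path, payload), timeout=60) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example calls, to be run while the application is up:
# call("/agentic-ai/pto-request", {"employee_id": 1, "days": 3})
# call("/agentic-rag/policy-query", {"query": "What is the PTO policy?", "employee_id": 1})
```

The generous timeout is intentional, since a local LLM can take a while to answer a policy query.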

Future Enhancements

- Production Database: Replace SQLite with PostgreSQL or MySQL for robustness.

- Multi-turn Conversations: Enhance the agent’s memory to support follow-up questions.

- More Tools: Add tools for other HR tasks, like onboarding or benefits queries.

- Other Frameworks: Try implementing the same agent using the CrewAI or LlamaIndex frameworks to explore ReAct agent workflows.

Conclusion

This HR agent demonstrates the power of combining FlaskAPI, LangChain, and RAG to create an intelligent, efficient, and scalable HR solution built around a ReAct agent. By automating PTO requests and providing accurate policy answers, it streamlines HR operations and enhances the employee experience. Whether you’re a developer looking to build AI-driven HR tools or an HR professional seeking automation, this project offers a robust starting point.

Happy Coding!!